[080603]

Toke Barter from London-based Radarstation approached us with an ambitious, open mind and the roadmap for the Future Media & Technology division of the BBC in hand.

Task: Transform the static strategic lines on paper into audience reactive visuals for the upcoming event to be held in the O2 Arena (aka The Millennium Dome) in London.

Conceptually anchored to the colored lines in the roadmap, we developed a visual language articulated by algorithmically drawing line representations of text and imagery.

Visuals

The role of the visuals was to tie the event together by providing an encompassing visual style and giving the audience an overview of the event. Content from the various presentations was to be remixed in the visuals, with specific elements coming in and out of focus. The audience could participate via SMS messages displayed as part of the visual landscape.

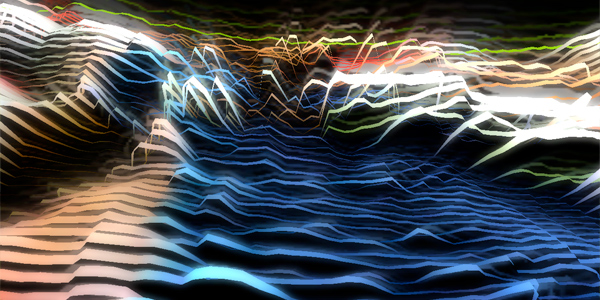

Line Landscape

The core of the visuals is the line landscape, made up of a multitude of lines that together form a continuous deformable surface. The shape and color of the landscape are determined by image data loaded into the visuals at runtime, and the landscape can be configured to display anything from the unedited image to an abstract colorful terrain. Color data from the loaded images is used to control vertex coloring, heightmap and line width in the line landscape.
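The mapping from image data to line geometry can be sketched roughly as follows. This is a minimal Python illustration, not the original Unity code: the function names, the use of luminance for height and width, and the default ranges are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

Pixel = Tuple[int, int, int]  # 8-bit RGB


def luminance(pixel: Pixel) -> float:
    """Perceived brightness in the range 0..1 (Rec. 601 weights)."""
    r, g, b = pixel
    return (0.299 * r + 0.587 * g + 0.114 * b) / 255.0


def build_line_landscape(
    image: List[List[Pixel]],
    max_height: float = 10.0,  # hypothetical height scale
    min_width: float = 0.5,    # hypothetical line width range
    max_width: float = 3.0,
) -> List[List[Dict]]:
    """Turn each image row into one line; each pixel becomes one vertex."""
    lines = []
    for z, row in enumerate(image):
        line = []
        for x, pixel in enumerate(row):
            lum = luminance(pixel)
            line.append({
                # Brightness lifts the vertex (heightmap).
                "position": (float(x), lum * max_height, float(z)),
                # The pixel color is reused as the vertex color.
                "color": tuple(c / 255.0 for c in pixel),
                # Brighter pixels also give thicker line segments.
                "width": min_width + lum * (max_width - min_width),
            })
        lines.append(line)
    return lines
```

Setting `max_height` to zero and keeping the widths equal would give something close to the unedited image, while larger values push the result toward an abstract terrain.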

On top of the data from loaded images, the line landscape is also sound-reactive. Audio input is provided by a version of Jonathan Czeck's AudioInput plugin modified to expose the entire sound buffer to Unity. A Fast Fourier Transform is then applied to the signal using the Exocortex.DSP library. The analyzed sound drives the center of the landscape, creating a ripple that travels outward to the edges.
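One way to picture the sound reaction is sketched below in Python. It is a stand-in under stated assumptions and does not use the AudioInput plugin or the Exocortex.DSP API: the radix-2 FFT is written out by hand, and `band_level` and `Ripple` are hypothetical helpers. The ripple is modeled as a short history of levels, so a vertex further from the center shows an older level.

```python
import cmath
from collections import deque


def fft(samples):
    """Recursive radix-2 Cooley-Tukey FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def band_level(samples, lo_bin, hi_bin):
    """Mean magnitude of FFT bins lo_bin..hi_bin-1, normalized by buffer length."""
    spectrum = fft(samples)
    mags = [abs(c) for c in spectrum[lo_bin:hi_bin]]
    return sum(mags) / (len(mags) * len(samples))


class Ripple:
    """Feeds levels in at the center and lets them travel outward one step per push."""

    def __init__(self, max_radius):
        size = max_radius + 1
        self.history = deque([0.0] * size, maxlen=size)

    def push(self, level):
        # Newest level sits at index 0 (the center); the oldest falls off the edge.
        self.history.appendleft(level)

    def offset_at(self, distance):
        # A vertex at this distance from the center shows the level pushed
        # int(distance) steps ago.
        index = min(int(distance), len(self.history) - 1)
        return self.history[index]
```

Each frame, the level of one frequency band would be pushed into the ripple, and every vertex would add `offset_at(distance_to_center)` to its height.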

Finally, a slow-moving sine wave gives a pulsating motion to the landscape, keeping the surface 'alive' by continuously deforming it slightly. The sine wave is implemented as a vertex shader in order to take some of the computational burden off the CPU.
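The per-vertex calculation such a shader performs can be sketched in Python for illustration. The parameter names and values here are assumptions, not the original shader's; the point is only that each vertex height gets a small offset that depends on its position and on time.

```python
import math


def sine_offset(x, z, time, amplitude=0.3, wavelength=40.0, period=8.0):
    """Height offset for a vertex at (x, z) at the given time in seconds.

    amplitude, wavelength and period are hypothetical tuning values: a small
    amplitude and a long period keep the motion slow and subtle.
    """
    phase = 2.0 * math.pi * ((x + z) / wavelength - time / period)
    return amplitude * math.sin(phase)
```

Because the offset depends only on the vertex position and a single time value, it can be evaluated independently for every vertex, which is what makes it a good fit for the GPU.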

Camera & Effects

The ability to load any image into the landscape and display arbitrary text messages places demands on the camera controls in terms of zooming and framing. Instead of exposing all this to the operator, the process of finding an optimal camera position is automated, greatly simplifying the task of controlling the system in real time. For the operator, framing a part of the landscape is reduced to deciding whether to do so or not.
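A common way to automate this kind of framing is to compute how far back a perspective camera must sit for a target rectangle to fit the view. The Python sketch below shows that calculation; it is an assumption about how such a system could work, not a description of the original implementation, and `framing_distance` and its defaults are hypothetical.

```python
import math


def framing_distance(width, height, vertical_fov_deg=60.0, aspect=16 / 9, margin=1.1):
    """Distance at which a width x height rectangle facing the camera fits the view.

    vertical_fov_deg is the camera's vertical field of view, aspect is
    viewport width / height, and margin > 1 leaves some air around the target.
    """
    half_tan = math.tan(math.radians(vertical_fov_deg) / 2.0)
    # Distance needed for the target's height to fit vertically.
    fit_height = (height / 2.0) / half_tan
    # Distance needed for the target's width to fit horizontally.
    fit_width = (width / 2.0) / (half_tan * aspect)
    return margin * max(fit_height, fit_width)
```

The camera would then be placed at the target's center plus this distance along the reversed viewing direction, so the operator only has to pick what to frame.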

Even with hundreds of lines with hundreds of segments each, the extent of the line landscape is finite. To counter this and give the appearance of a landscape stretching into infinity, we implemented video feedback as a post-processing effect. Each rendered image is repeated as a copy of itself in the background, slightly offset, scaled or rotated, giving the appearance of a never-ending surface of lines.
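The feedback idea can be illustrated on a tiny grayscale frame in Python. This is a simplified sketch, not the original post-processing effect: it handles only scale and offset (no rotation), uses nearest-neighbor sampling, and the `decay` parameter that dims each echo is an assumption.

```python
def feedback_frame(current, previous, scale=0.9, offset=(0, 0), decay=0.8):
    """Composite the current frame over a shrunken, dimmed copy of the previous output.

    current and previous are equally sized 2D lists of brightness values (0..1).
    Feeding each result back in as `previous` produces a chain of ever smaller echoes.
    """
    h, w = len(current), len(current[0])
    cx, cy = (w - 1) / 2.0, (h - 1) / 2.0
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            # Inverse mapping: find which pixel of the previous frame lands here
            # after scaling about the center and shifting by the offset.
            sx = round(cx + (x - cx - offset[0]) / scale)
            sy = round(cy + (y - cy - offset[1]) / scale)
            inside = 0 <= sx < w and 0 <= sy < h
            echo = previous[sy][sx] * decay if inside else 0.0
            # Freshly rendered lines stay on top of the echo.
            row.append(max(current[y][x], echo))
        out.append(row)
    return out
```

Calling this once per frame with the last output as `previous` makes every line reappear smaller and fainter behind itself, which is what suggests a surface continuing into the distance.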

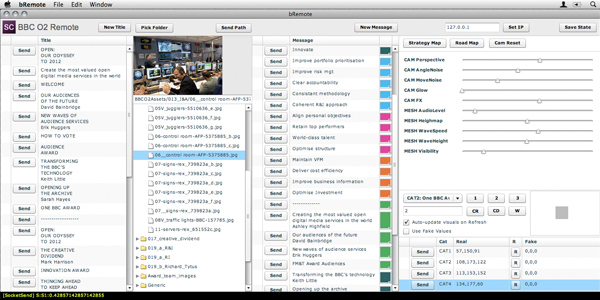

Controllers

Two small additional applications were built to facilitate the moderation of incoming SMS messages and to control titles, imagery, subtitles, rendering parameters, sequencing and SMS votes for the awards.

Implemented as lightweight Adobe AIR applications built in Flex Builder, they communicate in real time over a network socket connection with the dual Mac Pros powering the rear-projected displays.

A hardware equalizer was used to provide fine-grained control over the audio before it reached the FFT analysis.